Trumpet Harmonizer

ECE 5725 - Design With Embedded Operating Systems

A Project By Henry Geller (hng8) and Bobby Haig (rdh247)

Demonstration Video

Introduction

This is the Trumpet Harmonizer, which is a device that a trumpet player can use to harmonize with themself in real time. Similar devices exist, such as guitar pedals, but are usually exclusive to electric instruments, and alter the signal before it is amplified and played through speakers. However, this task is more difficult for instruments like the trumpet or the human voice, since there are no wires or electric components. We were inspired by certain devices that achieve this idea in some way, such as the vocoder or Jacob Collier's custom harmonizer. Additionally, effects pedals are known to be used on the sound of a trumpet, but they typically change the timbre of the sound or add reverberation, and we did not find any instances of any harmonizing effects aside from an octave pedal. Our project achieves harmonization for standalone instruments like the trumpet, albeit with many opportunities for future improvement.

Project Objective:

We wanted to create a device that can allow a trumpet player to harmonize with themself in real time. A microphone senses the output from the bell of a trumpet, and the audio is processed and pitch-shifted by a Raspberry Pi, and then the pitch-shifted audio is output to a set of speakers so that both the original sound from the trumpet and the pitch-shifted output from the speakers sound in harmony. We also take input from the user via a touch slider to determine the shift interval.

Music Theory Background

Explaining why we chose a touch slider to select the pitch-shift amount requires a bit of music theory:

The concept of pitch-shifting relies upon the idea of a musical interval. An interval is the distance between two musical notes, expressed in "semitones": the number of note-to-note steps separating the two notes. The most basic interval is the octave, which spans two notes whose frequencies differ by a factor of two. In Western music, an octave is divided into twelve different pitches, and thus there are twelve different types of intervals, because once an octave has been reached, the list of intervals repeats.

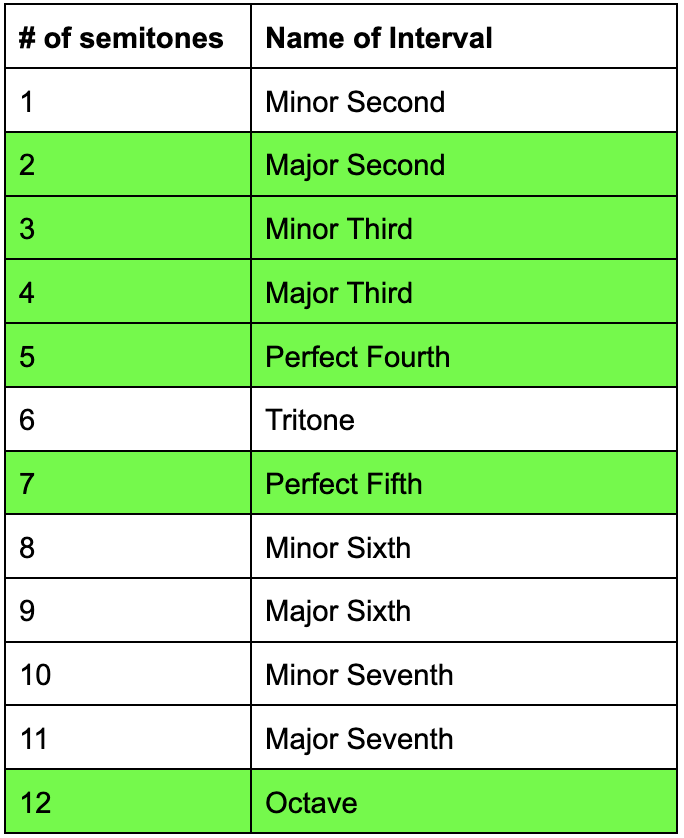

Table 1: The 12 Musical Intervals (Our Trumpet Harmonizer Uses the Green Intervals)

Out of the twelve possible intervals, we knew we would only be able to offer a subset of them on the touch slider, so we chose the ones most commonly used for a two-part harmony (shown in green in the table above). However, rarely in music is there a two-part harmony where both parts move in perfect parallel motion with each other. Parallel motion is when two notes sounding in harmony both move by the same interval at the same time. If our amount of pitch-shifting were constant, we would only be able to achieve parallel motion, where a change in the input note causes the same change in the output note. This would be great if we were playing rock guitar (a prime example of parallel motion), but we wanted more musical versatility than that. To achieve this, we wanted the ability not only to choose between several intervals but to switch between them instantaneously, as easily as pressing down a valve on the trumpet. This matters because even a simple melody with a simple accompanying harmony requires switching between the most common intervals. In the video above, a short melody was played to demonstrate the harmonizer. The harmony chosen to accompany the melody was a third above it, which required changing the pitch shift between a minor third and a major third as the melody was played.

Design

Audio Input/Output

We make use of the PortAudio C library for sound input and output through our USB microphone and audio jack speakers. We knew we needed to use C since we needed high speeds and low latency. PortAudio has many audio-related functions - we started off just with the ability to concurrently record audio with the microphone and play back the audio unchanged via a stream as if the microphone and speakers were connected via a wire. Per PortAudio's website, "A Stream represents an active flow of audio data between your application and one or more audio Devices." PortAudio offers two ways to process the audio and communicate between the input source and output. The first method is through the Read/Write method, where PortAudio provides a synchronous read/write interface for acquiring and playing audio. We do not use this method, as PortAudio admits that there is high latency associated with it. Instead, we use a callback function, which is periodically invoked by the stream every time PortAudio needs your application to produce audio data, making it run effectively as a while loop. We use the Pa_StartStream() function in the library to start the stream, and once the stream is running then the callback function is called continuously. The stream passes pointers to buffers which hold the audio data to the callback, so the audio can be processed and sent back to the stream as output. We handle all of our pitch-shifting logic within the callback and give the stream the altered audio.

Pitch Shift

The first step in our processing sequence is to detect the frequency of the note being played on the trumpet. We achieved this by performing a Fast Fourier Transform (FFT) on the samples from the microphone. The FFT relies on the idea that any signal can be expressed as a superposition of sine waves of different frequencies; its output is the set of frequencies detected and their magnitudes. Unlike Python, where libraries provide ready-made FFTs (np.fft.fft() works well), C offers fewer options. We based our FFT implementation on Professor Hunter Adams' FFT handout. We use a buffer of 4096 sound samples, and the result of the FFT is 4096 frequency bins spaced Fs/4096 apart, where Fs is the sample rate (44.1 kHz in our case). We chose 4096 as the buffer size because the FFT needs a number of samples that is a power of 2, and we needed the resolution of the FFT (the distance between adjacent frequency bins) to be around 10 Hz, which is the smallest gap between notes at the low end of the trumpet's range. Had we picked 2048 as the buffer size, the frequency bins would be around 21 Hz apart, multiple notes would have corresponded to the same bin, and we would not have been able to distinguish between them.
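The resolution argument above is simple arithmetic, sketched here in C. The helper names `bin_width_hz` and `bin_to_hz` are hypothetical and are not taken from the project code.

```c
#include <assert.h>

/* Spacing in Hz between adjacent FFT bins. */
double bin_width_hz(double sample_rate_hz, int fft_size)
{
    assert(fft_size > 0);
    return sample_rate_hz / fft_size;
}

/* Frequency in Hz at the centre of bin number `bin`. */
double bin_to_hz(int bin, double sample_rate_hz, int fft_size)
{
    return bin * bin_width_hz(sample_rate_hz, fft_size);
}
```

With a 44.1 kHz sample rate, `bin_width_hz(44100.0, 4096)` is about 10.8 Hz and `bin_width_hz(44100.0, 2048)` is about 21.5 Hz, which matches the figures quoted in the text.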

Once the FFT has finished, we find the peak frequency by looping over all the frequency bins and determining which one has the highest magnitude.
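A peak search of this kind might look like the following sketch. It assumes the FFT output is available as separate real and imaginary float arrays and compares squared magnitudes, which avoids a square root without changing which bin wins. The function name `peak_bin` and the `lo`/`hi` bounds are hypothetical.

```c
#include <assert.h>

/* Return the index in [lo, hi) whose squared magnitude
 * re[k]^2 + im[k]^2 is largest. */
int peak_bin(const float *re, const float *im, int lo, int hi)
{
    assert(lo >= 0 && lo < hi);
    int best = lo;
    float best_mag = re[lo] * re[lo] + im[lo] * im[lo];
    for (int k = lo + 1; k < hi; k++) {
        float mag = re[k] * re[k] + im[k] * im[k];
        if (mag > best_mag) {
            best_mag = mag;
            best = k;
        }
    }
    return best;
}
```

For a real-valued input, the upper half of the FFT output mirrors the lower half, so searching only the first half of the bins is usually sufficient.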

Unfortunately, this way of detecting the peak frequency of a trumpet note turned out not to always produce the desired result. As described earlier, the Fourier transform treats an audio signal as a superposition of sine waves of different frequencies and computes their relative magnitudes. Therefore, if the input audio is a single sine wave, the FFT will always detect its frequency. However, the sound wave coming out of a trumpet consists of sine waves of several frequencies (if it didn't, it wouldn't sound like a trumpet). The note being played is called the fundamental frequency, but there are also audible sinusoids at higher frequencies, called harmonics. What we discovered upon testing was that the FFT would sometimes detect the second harmonic as the peak frequency instead of the fundamental for just a few iterations, and then return to correct functioning. This fluctuation had a drastic impact on the output because the detected frequency was intermittently jumping by an octave, causing the output to jump by an octave at the same time. We got around this issue by limiting the range of frequencies over which we searched for the peak to a single octave. Thus, the second harmonic of any note we tried to play would fall outside the search range. The tradeoff is that the harmonizer only functions over the following range of the trumpet:

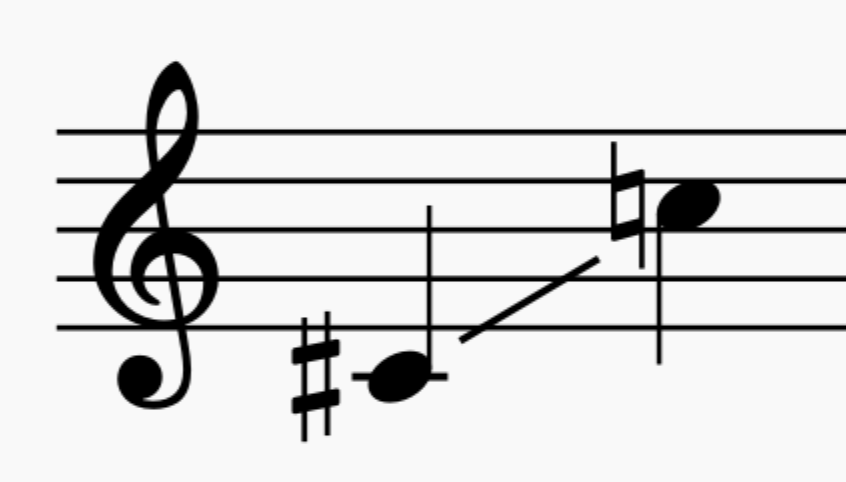

Image 1: The Input Range of our Harmonizer

Another issue we encountered, similar to the octave-jumping problem, was that the peak frequency detected by the FFT would often alternate between two adjacent bins, even when the sound of the input note was seemingly unchanged. We got around this issue by using a form of averaging over five consecutive peak detections. In this algorithm, we treated detected frequencies within one bin of each other as the same frequency, and then computed the mode of the five most recent detections under that rule. This enhancement had a significant impact on the output sound, eliminating much of the noise and fluctuation that was previously present.
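One way to implement a mode with a one-bin tolerance is sketched below. It is an illustration under assumptions, not the project's implementation: the history is stored oldest-first, each entry "votes" for every entry within one bin of it, and ties go to the most recent entry. The names `smoothed_bin` and `push_detection` are hypothetical.

```c
#include <assert.h>
#include <stdlib.h>

#define HISTORY 5

/* Return the entry of hist[] that has the most entries within
 * +/-1 bin of it (itself included). hist[HISTORY - 1] is the
 * newest detection; ties are resolved in favour of newer entries. */
int smoothed_bin(const int hist[HISTORY])
{
    int best = hist[HISTORY - 1];
    int best_votes = 0;
    for (int i = HISTORY - 1; i >= 0; i--) {
        int votes = 0;
        for (int j = 0; j < HISTORY; j++) {
            if (abs(hist[i] - hist[j]) <= 1) {
                votes++;
            }
        }
        if (votes > best_votes) {
            best_votes = votes;
            best = hist[i];
        }
    }
    return best;
}

/* Drop the oldest detection and append the newest one. */
void push_detection(int hist[HISTORY], int bin)
{
    assert(bin >= 0);
    for (int i = 0; i < HISTORY - 1; i++) {
        hist[i] = hist[i + 1];
    }
    hist[HISTORY - 1] = bin;
}
```

With a history of 41, 42, 41, 82, 41, the stray 82 is outvoted and the result stays at 41; the output only changes once a new value holds the majority of the window.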

Once the frequency of the input note had been computed, we combined it with the input value from the touch slider to determine the number of bins by which the FFT output should be shifted before computing the inverse FFT (iFFT). Since there is no simple algorithm which could compute the shift amount from the given information, we used massive if-else statements to determine it. The final step in the frequency domain was to shift the bins by that amount and to zero out the lowest-frequency bins, because there was a lot of low-frequency noise otherwise. Once we had the shifted frequency-domain data, we performed the iFFT to return to the time domain so that the pitch-shifted audio could be output to the speakers.
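The shifting and zeroing steps can be sketched as below. This is a simplified illustration under assumptions: it only handles upward shifts, it operates on the positive-frequency half of the spectrum stored as float arrays, and the names `shift_spectrum`, `half`, and `low_cut` are hypothetical. How the project code lays out its arrays and handles the mirrored upper half is not shown in the text.

```c
#include <assert.h>

/* Move every bin of the positive-frequency half up by `shift`
 * bins (shift >= 0), then clear all bins below `low_cut`. */
void shift_spectrum(float *re, float *im, int half, int shift, int low_cut)
{
    assert(shift >= 0 && shift <= half);
    assert(low_cut >= 0 && low_cut <= half);

    /* Copy from the top down so no bin is overwritten before it moves. */
    for (int k = half - 1; k >= shift; k--) {
        re[k] = re[k - shift];
        im[k] = im[k - shift];
    }
    /* Bins vacated by the shift carry no energy. */
    for (int k = 0; k < shift; k++) {
        re[k] = 0.0f;
        im[k] = 0.0f;
    }
    /* Remove low-frequency noise. */
    for (int k = 0; k < low_cut; k++) {
        re[k] = 0.0f;
        im[k] = 0.0f;
    }
}
```

Two caveats apply to a shift of this kind. For the inverse FFT to produce a real signal, the upper half of a full-length spectrum must be the complex conjugate mirror of the lower half. Also, moving every bin by the same count adds the same number of hertz to every partial, so the shifted harmonics no longer sit at integer multiples of the new fundamental.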

Touch-Slider Input

Image 2: Closeup of the External Hardware. Blue Capacitive Touch Slider on the Left Soldered to a Breadboard on the Right

One of the most arduous parts of this project was achieving the communication between the capacitive touch slider and the Raspberry Pi. We knew when we bought the touch slider that it communicated using I2C, and it had an extremely simple set of pins: power, ground, data (SDA), clock (SCL), and an interrupt wire to signify a new touch event (we didn't use this pin). We also knew that the Raspberry Pi had GPIO pins meant for use with I2C, so we expected the setup to be quick.

Alas, it was not. Our initial attempts to read data from the touch slider through the command 'i2cget' in the command line only returned nonsense. After a long time reading through datasheets and online forums, we concluded that it would be simpler to have the touch slider communicate with an Arduino than with the RPi. The particular touch slider we bought was designed for use with an Arduino, and there were already existing Arduino libraries for reading data from the slider. Thus, we were able to connect the four wires from the slider to the corresponding input ports on the Arduino and, given the code provided in the library, easily read data from the slider. From there, we used a USB serial connection between the Arduino and RPi, and we were able to read the slider data into our C program using the read() function.

Another significant bug that we experienced had to do with this read() function. Our desired functionality was that when the slider was not being pressed, there would be no audio output to the speakers. The read() function achieved this because when the slider was not receiving any touch input, read() would block and the callback function would not run. Once the slider received touch input, the callback would continue to run. The problem was that while read() was blocking, input audio data was still being stored somewhere, so that when read() unblocked, the speakers would play audio that had been recorded during the entire time that read() was blocking. We fixed this issue when we found the poll() function, which waits only up to 20 milliseconds for input from the touch slider. If there is no input after that time, the read() is skipped for that iteration of the callback, and an array of zeroes is passed to the speakers so that there is no sound output. At the same time, we also found the tcflush() function, which allowed us to discard stale data queued on the serial port instead of processing it.
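The poll-then-read pattern described above can be sketched with standard POSIX calls. The helper name `read_byte_with_timeout` and its return convention are hypothetical; the project's callback may structure this differently.

```c
#include <assert.h>
#include <poll.h>
#include <unistd.h>

/* Wait up to `timeout_ms` for a byte to arrive on `fd`.
 * Returns 1 and stores the byte in *value if one was read,
 * 0 if the timeout expired first, and -1 on error. */
int read_byte_with_timeout(int fd, int timeout_ms, unsigned char *value)
{
    assert(value != NULL);

    struct pollfd pfd = { .fd = fd, .events = POLLIN };
    int ready = poll(&pfd, 1, timeout_ms);

    if (ready < 0) {
        return -1;                  /* poll() itself failed */
    }
    if (ready == 0) {
        return 0;                   /* nothing arrived in time */
    }
    if (!(pfd.revents & POLLIN)) {
        return -1;                  /* hang-up or error on the descriptor */
    }
    return read(fd, value, 1) == 1 ? 1 : -1;
}
```

A caller in the audio path would treat a return value of 0 as "slider not touched" and output silence for that block, so the call never stalls for longer than the timeout.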

One final thing to note about the touch slider is that the data it provides is a value in the range 0 to 100 which signifies the location of the touch on the slider. However, we only wanted the slider to give six different values rather than 100, so we discretized the 100 possible values into six different bins, each corresponding to a shift interval:

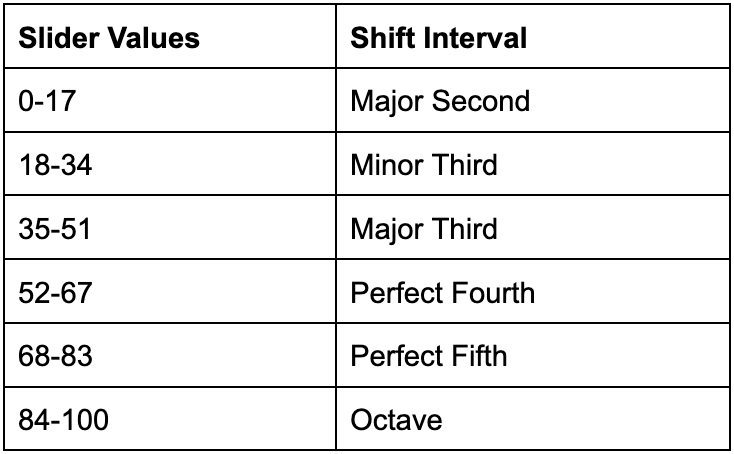

Table 2: Discrete Bins of our Touch Slider
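A discretization of this kind might be written as below. The sketch assumes six equal-width zones across 0 to 100; the actual boundaries and the interval assigned to each zone are those in Table 2 and are not reproduced here. The name `slider_zone` is hypothetical.

```c
#include <assert.h>

#define NUM_ZONES 6

/* Map a slider position in 0..100 to a zone index in 0..5,
 * assuming equal-width zones (an assumption, see Table 2 for
 * the real boundaries). */
int slider_zone(int position)
{
    assert(position >= 0 && position <= 100);
    /* 101 possible positions, so dividing by 101 keeps 100 in zone 5. */
    return position * NUM_ZONES / 101;
}
```

The returned index could then select one of the six shift intervals from a lookup table.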

Drawings

Hardware Diagram

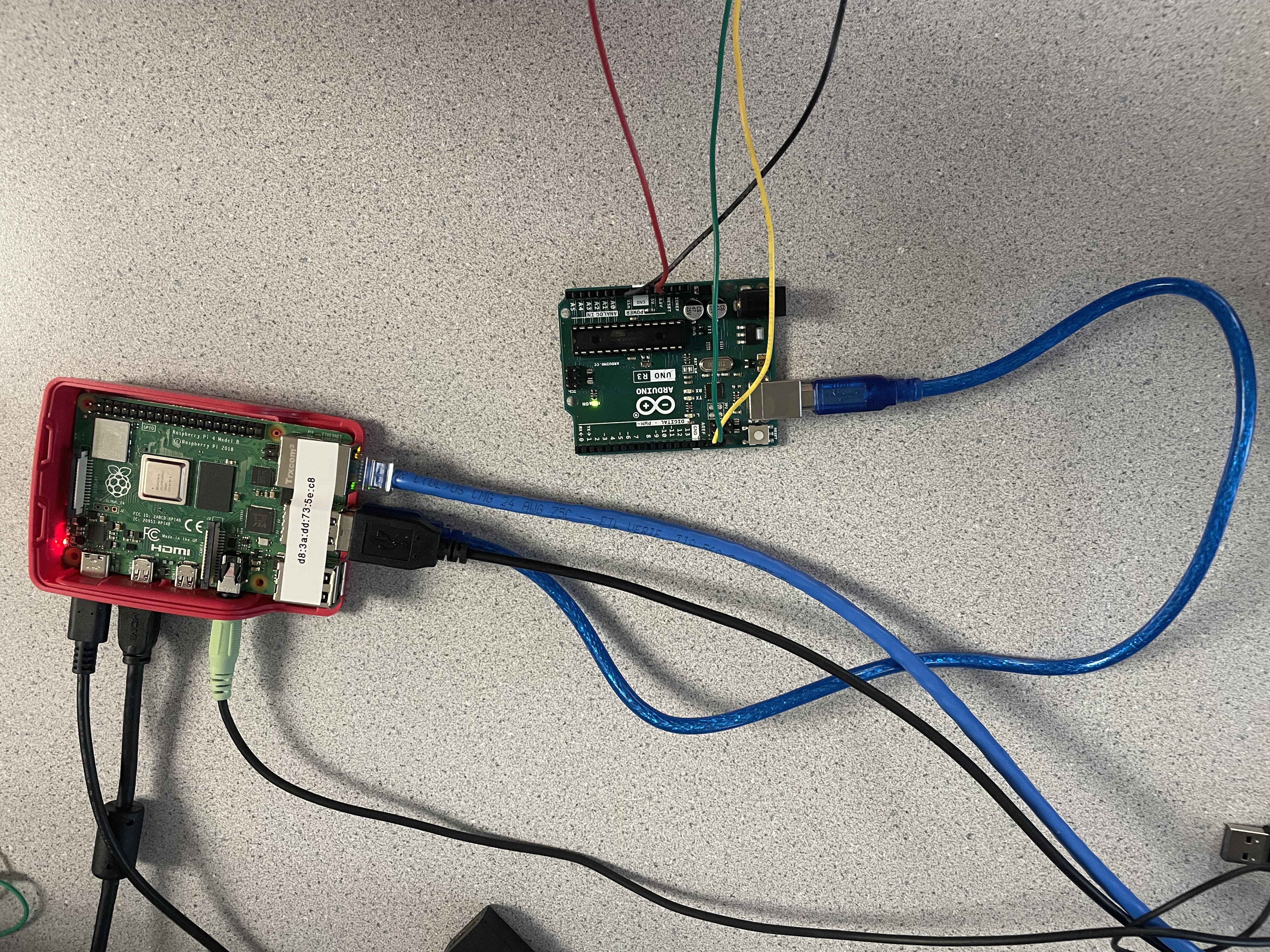

This diagram details the hardware that makes up the Trumpet Harmonizer. We use an Arduino to take input from our capacitive touch slider via I2C and the data is passed via a USB connection to the Raspberry Pi. The Raspberry Pi also receives measurements from the USB microphone and is connected to speakers via an audio jack to output the processed audio data.

AudioProcess Program Diagram

This diagram shows the logic behind the audio_process_v2.c program. We use C over other languages like Python to increase speed and decrease latency. The program initializes a stream via the PortAudio library which receives input from the USB microphone and outputs to the speakers. The stream repeatedly calls our wireCallback function, which immediately performs an FFT on the new audio data. The callback then polls our serial input to see if there is any data coming from the touch slider. If not, we do not want to output anything, so we write zeroes to the output buffer and return, and the stream invokes the callback again. If there is data, we read it and shift the FFT accordingly (as described above). The iFFT is performed to return to the time domain, the result of the iFFT is sent to the output buffer (which is then passed to the speakers via the stream), and the stream calls the callback function again to restart the process.
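The branch at the end of the callback can be illustrated without any audio library. In this sketch, `fill_output` is a hypothetical helper: `processed` stands for the samples produced by the iFFT, and `slider_touched` for the result of polling the serial port.

```c
#include <assert.h>
#include <string.h>

/* Fill the output buffer for one callback: silence when the slider
 * is untouched, otherwise the pitch-shifted samples. */
void fill_output(float *out, const float *processed,
                 unsigned long frames, int slider_touched)
{
    assert(out != NULL);
    if (!slider_touched) {
        /* All-zero bytes represent 0.0f, i.e. silence. */
        memset(out, 0, frames * sizeof(float));
        return;
    }
    memcpy(out, processed, frames * sizeof(float));
}
```

Writing zeroes rather than skipping the write matters because the stream plays whatever the output buffer contains when the callback returns.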

Testing

The testing of the Trumpet Harmonizer was in multiple stages as our project expanded and evolved in complexity. Testing involved working through elements of different C libraries, experimenting with code we wrote, and measuring the effectiveness of our external hardware components.

First, we needed to make sure audio would work on our current Linux operating system, as we knew there were issues with certain releases. We tested this by obtaining a USB microphone and a studio pair of computer speakers from the course staff, and we were able to record audio and play it back using the 'arecord' and 'aplay' commands.

We then conducted extensive research on audio libraries that would help us achieve our goal of concurrently recording and outputting audio. We settled on the PortAudio C library but needed to test how effectively it could stream audio. We were optimistic about this library, as it was described as something that would allow us to concurrently record through a microphone and output to speakers while processing that audio. However, we were discouraged when some of the basic example programs did not work as expected on the Pi. We then ran some of the test files, specifically patest_wire.c, which implements the PortAudio stream through callback functions. We were excited when this worked as expected, albeit with some noticeable latency.

Our next phase of design was to implement the FFT to detect incoming frequencies from the microphone. As described above, after the FFT was completed, we looped through the frequency bins to determine which one had the largest magnitude, which would tell us the principal frequency that was being detected. We tested the effectiveness of the FFT by recording a sine wave from an online tone generator with the microphone and feeding the samples into the FFT (at the time, 2048 bins). The output that we got from the FFT seemed like nonsense. We realized that we had neglected the imaginary terms in the DFT. Although the audio we record is purely real, the imaginary coefficients are used and modified by the FFT algorithm, so it would be incorrect to set them to zero in each iteration. Once we changed the code to reflect this realization, we were able to detect the correct frequency of the incoming sine wave.

Our next phase of testing was with regard to our capacitive touch slider. As the touch slider communicates via I2C, our initial testing was with Linux I2C commands. Once the device was connected to the Pi (via SDA, SCL, 3.3V, and GND ports), we ran the 'i2cdetect -y 1' command, which successfully told us that the Pi detected a peripheral at address 0x31, consistent with the documentation for the touch slider. We ran into issues when running the 'i2cget' command. From the documentation of the ATtiny841 (the controller on the capacitive touch slider), we knew which register held the data, but could not get consistent readings. We realized that instead of providing us with the location of a touch on the slider, the command was returning a repeating cycle of hex values. We also tested the wiringPi library to read I2C values from a C file, but the library seemed outdated, so we abandoned this idea.

As described above, we switched to reading I2C data from an Arduino, since the library provided by TinyCircuits included specific Arduino code for reading the incoming I2C data. We tested this using the serial monitor on the Arduino, where we printed the incoming data. This was successful, as we saw the monitor display a value from 1-100 based on the location of the touch.

Image 3: Arduino to Raspberry Pi USB Connection

The addition of the Arduino added another layer of testing: the serial communication to the Raspberry Pi. Testing this was simple: we created a new C program with example code (linked below in references) which set up the serial port and read data from it. The harder part was testing receiving data within the audio_process program. The difficulty was not due to the actual communication between devices, but to the impact that it had on our program. The first time we tested the serial communication within our callback function, we noticed that there was incredible latency: sometimes the audio was played by the speakers 30 seconds after it was recorded. By placing print statements around the serial port setup and the serial reads, we realized that the read() call which was reading data from the USB was blocking. Audio input was being written to the input buffer, but the buffer was never reset since the callback function was paused. We were essentially storing audio data, waiting for the touch slider to be touched, and then playing back everything that had been saved. We confirmed this by placing a finger on the touch slider as soon as we started the program: with slightly more latency than before, the program operated as it had before we added the serial read.

To get around this we needed a way to make the read() call non-blocking. The first thing we tested was changing the properties of the open file description, which did not work for us: each read resulted in an error instead of a value from the touch slider. The next thing we tested was the poll() function, which waits for an event on a file descriptor. Our idea was that based on the return value of poll(), we would either read (if there was data ready) or skip the read, making it effectively non-blocking. poll() is itself a blocking function, but it has a timeout feature. We initially set the timeout to 1 ms, which worked in skipping the read() call, but was too small a window to receive data, so read() was skipped even when we were touching the capacitive touch slider. We iteratively tested different timeouts, looking for the smallest value at which we read every incoming location value from the slider, which was 20 ms.

The last thing that we needed to test was the system using a trumpet. For the duration of the project, we tested using the online tone generator linked above for its convenience and to be respectful of other groups. As expected, the output sound that we got when using the trumpet was not as clean as when we were testing with pure sine waves. The principal frequency detected by the FFT was often all over the place due to the nature of the trumpet's harmonics. Additionally, since the sound was not as pure as that of a sine wave, the frequency bin with the highest magnitude often changed between adjacent bins. We were able to see this by printing the frequency bin that the FFT detected and comparing it with the known frequency of the note that Bobby played on the trumpet. As described above, we solved this issue by throwing out detected frequencies in higher bins (which we assume to be false measurements) and taking the mode of the incoming principal frequencies with a tolerance of ±1 bin.

Result

At a high level, we accomplished our goal that we set from the start. The device successfully transforms time domain audio input into the frequency domain, shifts the frequency based on user input from a touch slider, goes back into the time domain, and plays back to the speakers as we drew it up in the design ideation stage. Our idea was very ambitious as it had a lot of moving parts, and we are proud we were able to get it working as a complete system.

The shortcomings, however, are quite noticeable. Mainly, the pitch-shifted sound that gets output to the speakers does not sound very much like a trumpet (as anyone else in the lab during our testing stages can attest). While the output is just a filtered version of the input, it is clear that the filtering process changes the timbre of the trumpet significantly, and this would be the main focus of any future improvements to this prototype. One step toward improving this would be to explore pitch-shifting algorithms other than the frequency-domain shift that we implemented. In our research, we encountered alternative algorithms which operate in the time domain and which may have produced better results.

Another possible area of improvement is latency. In our prototype there is noticeable latency of two different kinds. First, there is the latency of audio pass-through from microphone to speaker. Even before we implemented any pitch-shifting algorithm, the initial example program from PortAudio was giving us a latency of around 0.2 seconds, which was already higher than desired. The addition of the frequency analysis code in our program affected this slightly but in a way that was barely noticeable. What did have an additional significant effect on the latency, however, was the process of smoothing out the output by using a type of averaging over recent detected frequencies. This did not change the latency from microphone to speakers, but added latency from a note change on the trumpet to the corresponding note change on the harmonizer. This latency was about 0.3 seconds. These combined latencies would need to be significantly improved in order to use this product for serious musical applications.
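The size of the smoothing latency can be estimated with a short calculation. The sketch below rests on two assumptions that the text does not state outright: each callback processes one 4096-sample block at 44.1 kHz and yields one frequency detection, and the smoothed output changes only after the new note holds a majority of the five-entry history. The function names are hypothetical.

```c
#include <assert.h>

/* Duration in milliseconds of one block of `frames` samples. */
double block_ms(int frames, double sample_rate_hz)
{
    assert(sample_rate_hz > 0.0);
    return 1000.0 * frames / sample_rate_hz;
}

/* Number of new detections needed before a new value holds a
 * majority in a history of `history` entries. */
int blocks_to_majority(int history)
{
    assert(history > 0);
    return history / 2 + 1;
}
```

Under these assumptions one block lasts about 93 ms and three blocks are needed for a majority, which gives roughly 0.28 seconds and is in line with the measured figure of about 0.3 seconds.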

Additional future work on this project may include using multiprocessing to reduce latency, 3D-printing something that would hold the electronic components onto the trumpet, and expanding the range of allowed notes.

Work Distribution

Project group picture

Bobby Haig

rdh247@cornell.edu

Henry Geller

hng8@cornell.edu

We divided the work for the project equally. All lab sessions were attended by both members who contributed by designing the overall system, programming, reading documentation, researching libraries, painful debugging, and rigorous testing.

Parts List

- Arduino Uno - $30.00 (Owned by Henry)

- Trumpet (Owned by Bobby)

- Capacitive Touch Slider from TinyCircuits - $4.95

- 200 mm 5 Pin Wiring Cable - $1.99

- Microphone - $19.95

- Raspberry Pi 4, breadboard, wires - provided by lab

Total: $56.89

References

PortAudio Library

PortAudio Example Patest_wire.c

Professor Hunter Adams' FFT Handout

ATtiny841 Datasheet

Capacitive Touch Slider Arduino Library

I2C on Raspberry Pi

Example Code: Serial Read in C

Poll Function Documentation

Using Poll vs PPoll

Example Using Poll Function

Audio Recommendations for Raspberry Pi

Code

audio_process_v2.c

audio_process_v2 is our main program, which processes the audio for pitch shifting. In the wireCallback() function, we collect audio from the stream and perform an FFT to go from the time domain to the frequency domain. We then get the location of a touch on the touch slider via USB serial from the Arduino and shift the FFT based on the peak frequency of the FFT and the touch location. Finally, we perform the inverse FFT to return to the time domain and send the processed data to the speakers.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 290 291 292 293 294 295 296 297 298 299 300 301 302 303 304 305 306 307 308 309 310 311 312 313 314 315 316 317 318 319 320 321 322 323 324 325 326 327 328 329 330 331 332 333 334 335 336 337 338 339 340 341 342 343 344 345 346 347 348 349 350 351 352 353 354 355 356 357 358 359 360 361 362 363 364 365 366 367 368 369 370 371 372 373 374 375 376 377 378 379 380 381 382 383 384 385 386 387 388 389 390 391 392 393 394 395 396 397 398 399 400 401 402 403 404 405 406 407 408 409 410 411 412 413 414 415 416 417 418 419 420 421 422 423 424 425 426 427 428 429 430 431 432 433 434 435 436 437 438 439 440 441 442 443 444 445 446 447 448 449 450 451 452 453 454 455 456 457 458 459 460 461 462 463 464 465 466 467 468 469 470 471 472 473 474 475 476 477 478 479 480 481 482 483 484 485 486 487 488 489 490 491 492 493 494 495 496 497 498 499 500 501 502 503 504 505 506 507 508 509 510 511 512 513 514 515 516 517 518 519 520 521 522 523 524 525 526 527 
528 529 530 531 532 533 534 535 536 537 538 539 540 541 542 543 544 545 546 547 548 549 550 551 552 553 554 555 556 557 558 559 560 561 562 563 564 565 566 567 568 569 570 571 572 573 574 575 576 577 578 579 580 581 582 583 584 585 586 587 588 589 590 591 592 593 594 595 596 597 598 599 600 601 602 603 604 605 606 607 608 609 610 611 612 613 614 615 616 617 618 619 620 621 622 623 624 625 626 627 628 629 630 631 632 633 634 635 636 637 638 639 640 641 642 643 644 645 646 647 648 649 650 651 652 653 654 655 656 657 658 659 660 661 662 663 664 665 666 667 668 669 670 671 672 673 674 675 676 677 678 679 680 681 682 683 684 685 686 687 688 689 690 691 692 693 694 695 696 697 698 699 700 701 702 703 704 705 706 707 708 709 710 711 712 713 714 715 716 717 718 719 720 721 722 723 724 725 726 727 728 729 730 731 732 733 734 735 736 737 738 739 740 741 742 743 744 745 746 747 748 749 750 751 752 753 754 755 756 757 758 759 760 761 762 763 764 765 766 767 768 769 770 771 772 773 | /* * * ECE 5725 FINAL PROJECT 2024 * HENRY GELLER (hng8) and BOBBY HAIG (rdh247) * LAB GROUP 13 (WED) * */ #include <errno.h> #include <fcntl.h> #include <stdio.h> #include <stdlib.h> #include <string.h> #include <termios.h> #include <unistd.h> #include <poll.h> #include <math.h> #include "portaudio.h" #define SAMPLE_RATE (44100) //Configurations for portAudio Stream typedef struct WireConfig_s { int isInputInterleaved; int isOutputInterleaved; int numInputChannels; int numOutputChannels; int framesPerCallback; /* count status flags */ int numInputUnderflows; int numInputOverflows; int numOutputUnderflows; int numOutputOverflows; int numPrimingOutputs; int numCallbacks; } WireConfig_t; #define USE_FLOAT_INPUT (1) #define USE_FLOAT_OUTPUT (1) /* Latencies set to defaults. 
*/ #if USE_FLOAT_INPUT #define INPUT_FORMAT paFloat32 typedef float INPUT_SAMPLE; #else #define INPUT_FORMAT paInt16 typedef short INPUT_SAMPLE; #endif #if USE_FLOAT_OUTPUT #define OUTPUT_FORMAT paFloat32 typedef float OUTPUT_SAMPLE; #else #define OUTPUT_FORMAT paInt16 typedef short OUTPUT_SAMPLE; #endif // create custom data type for fixed point arithmetic used in FFT typedef signed int fix; double gInOutScaler = 1.0; #define CONVERT_IN_TO_OUT(in) ((OUTPUT_SAMPLE) ((in) * gInOutScaler)) #define float2fix(a) ((fix)(a * 32768.0)) #define fix2float(a) ((float)(a) / 32768.0) #define multfix(a,b) ((fix)((signed long long) a )*((signed long long) b ) >> 15) //USB Microphone & Audio Jack Speakers detected here #define INPUT_DEVICE (Pa_GetDefaultInputDevice()) #define OUTPUT_DEVICE (Pa_GetDefaultOutputDevice()) //Used in FFT calculation - based on our project using 4096 frames #define LOG2_FRAMES (12) #define SHIFT_AMOUNT (4) //Arduino device name. Used to receive touch slider data via serial #define TERMINAL "/dev/ttyACM0" //Define empty array that will be populated with detected frequencies //Used to calculate the mode of past 5 data points to smooth out response int det[] = {0,0,0,0,0}; static PaError TestConfiguration( WireConfig_t *config ); // serial communication setup functions int set_interface_attribs(int fd, int speed) { struct termios tty; if (tcgetattr(fd, &tty) < 0) { printf("Error from tcgetattr: %s\n", strerror(errno)); return -1; } cfsetospeed(&tty, (speed_t)speed); cfsetispeed(&tty, (speed_t)speed); tty.c_cflag |= (CLOCAL | CREAD); /* ignore modem controls */ tty.c_cflag &= ~CSIZE; tty.c_cflag |= CS8; /* 8-bit characters */ tty.c_cflag &= ~PARENB; /* no parity bit */ tty.c_cflag &= ~CSTOPB; /* only need 1 stop bit */ tty.c_cflag &= ~CRTSCTS; /* no hardware flowcontrol */ //setup for non-canonical mode tty.c_iflag &= ~(IGNBRK | BRKINT | PARMRK | ISTRIP | INLCR | IGNCR | ICRNL | IXON); tty.c_lflag &= ~(ECHO | ECHONL | ICANON | ISIG | IEXTEN); tty.c_oflag 
&= ~OPOST; //fetch bytes as they become available tty.c_cc[VMIN] = 1; tty.c_cc[VTIME] = 1; if (tcsetattr(fd, TCSANOW, &tty) != 0) { printf("Error from tcsetattr: %s\n", strerror(errno)); return -1; } return 0; } void set_mincount(int fd, int mcount) { struct termios tty; if (tcgetattr(fd, &tty) < 0) { printf("Error tcgetattr: %s\n", strerror(errno)); return; } tty.c_cc[VMIN] = mcount ? 1 : 0; tty.c_cc[VTIME] = 5; /* half second timer */ if (tcsetattr(fd, TCSANOW, &tty) < 0) printf("Error tcsetattr: %s\n", strerror(errno)); } // end serial communication setup functions //Define Callback function as required by portAudio //Callback is called by the portAudio Stream when audio data is available //Stream passes audio data through inputBuffer, and expects processed date in outputBuffer //We use the callback function to: //1. Perform the FFT (to go from time domain to frequency domain) //2. Receive touch slider data via serial //3. Shift FFT based on touch slider data, recorded frequency, and mapping //4. Perform iFFT (to go from frequency domain to time tomain) //5. Provide the outputBuffer with processed data static int wireCallback( const void *inputBuffer, void *outputBuffer, unsigned long framesPerBuffer, const PaStreamCallbackTimeInfo* timeInfo, PaStreamCallbackFlags statusFlags, void *userData ); static int wireCallback( const void *inputBuffer, void *outputBuffer, unsigned long framesPerBuffer, const PaStreamCallbackTimeInfo* timeInfo, PaStreamCallbackFlags statusFlags, void *userData ) { INPUT_SAMPLE *in; OUTPUT_SAMPLE *out; int inStride; int outStride; int inDone = 0; int outDone = 0; WireConfig_t *config = (WireConfig_t *) userData; unsigned int n; int inChannel, outChannel; int fd; char *portname = TERMINAL; //File descriptor for reading serial from Arduino via USB fd = open(portname, O_RDONLY | O_NOCTTY | O_SYNC); /* This may get called with NULL inputBuffer during initial setup. 
     */
    if( inputBuffer == NULL) {
        close(fd);  // release the descriptor opened above before leaving early
        return 0;
    }

    /* Count flags */
    if( (statusFlags & paInputUnderflow) != 0 ) config->numInputUnderflows += 1;
    if( (statusFlags & paInputOverflow) != 0 ) config->numInputOverflows += 1;
    if( (statusFlags & paOutputUnderflow) != 0 ) config->numOutputUnderflows += 1;
    if( (statusFlags & paOutputOverflow) != 0 ) config->numOutputOverflows += 1;
    if( (statusFlags & paPrimingOutput) != 0 ) config->numPrimingOutputs += 1;
    config->numCallbacks += 1;

    //Define sin(x) and convert to fix type for FFT
    fix Sinewave[framesPerBuffer];
    for (n=0; n < framesPerBuffer; n++) {
        Sinewave[n] = float2fix(sin(6.283*((float)(n) / framesPerBuffer)));
    }

    inChannel=0, outChannel=0;

    if( config->isInputInterleaved )
    {
        in = ((INPUT_SAMPLE*)inputBuffer) + inChannel;
        inStride = config->numInputChannels;
    }
    else
    {
        in = ((INPUT_SAMPLE**)inputBuffer)[inChannel];
        inStride = 1;
    }

    if( config->isOutputInterleaved )
    {
        out = ((OUTPUT_SAMPLE*)outputBuffer) + outChannel;
        outStride = config->numOutputChannels;
    }
    else
    {
        out = ((OUTPUT_SAMPLE**)outputBuffer)[outChannel];
        outStride = 1;
    }

    //Convert data in the input buffer from float to fix
    fix fr[framesPerBuffer];
    fix fi[framesPerBuffer];
    for ( n=0; n < framesPerBuffer; n++) {
        fr[n] = float2fix(*in);
        fi[n] = 0;
        in++;
    }

    // start FFT calculation
    unsigned short m;    // one of the indices being swapped
    unsigned short mr ;  // the other index being swapped (r for reversed)
    fix tr, ti ;         // for temporary storage while swapping, and during iteration
    int i, j ;           // indices being combined in Danielson-Lanczos part of the algorithm
    int L ;              // length of the FFT's being combined
    int k ;              // used for looking up trig values from sine table
    int istep ;          // length of the FFT which results from combining two FFT's
    fix wr, wi ;         // trigonometric values from lookup table
    fix qr, qi ;         // temporary variables used during DL part of the algorithm

    // Bit reversal code
    for (m=1; m < (framesPerBuffer - 1); m++) {
        // swap odd and even bits
        mr = ((m >> 1) & 0x5555) | ((m & 0x5555) << 1);
        // swap consecutive pairs
        mr = ((mr >> 2) & 0x3333) | ((mr & 0x3333) << 2);
        // swap nibbles ...
        mr = ((mr >> 4) & 0x0F0F) | ((mr & 0x0F0F) << 4);
        // swap bytes
        mr = ((mr >> 8) & 0x00FF) | ((mr & 0x00FF) << 8);
        // shift down mr
        mr >>= SHIFT_AMOUNT ;
        // don't swap that which has already been swapped
        if (mr<=m) continue ;
        // swap the bit-reversed indices
        tr = fr[m] ;
        fr[m] = fr[mr] ;
        fr[mr] = tr ;
        ti = fi[m] ;
        fi[m] = fi[mr] ;
        fi[mr] = ti ;
    }

    //Danielson-Lanczos
    // Length of the FFT's being combined (starts at 1)
    L = 1 ;
    // Log2 of number of samples, minus 1
    k = LOG2_FRAMES - 1 ;
    // While the length of the FFT's being combined is less than the number of gathered samples
    while (L < framesPerBuffer) {
        // Determine the length of the FFT which will result from combining two FFT's
        istep = L<<1 ;
        // For each element in the FFT's that are being combined . . .
        for (m=0; m<L; ++m) {
            // Lookup the trig values for that element
            j = m << k ;                            // index of the sine table
            wr = Sinewave[j + framesPerBuffer/4] ;  // cos(2pi m/N)
            wi = -Sinewave[j] ;                     // sin(2pi m/N)
            wr >>= 1 ;                              // divide by two
            wi >>= 1 ;                              // divide by two
            // i gets the index of one of the FFT elements being combined
            for (i=m; i<framesPerBuffer; i+=istep) {
                // j gets the index of the FFT element being combined with i
                j = i + L ;
                // compute the trig terms (bottom half of the above matrix)
                tr = multfix(wr, fr[j]) - multfix(wi, fi[j]) ;
                ti = multfix(wr, fi[j]) + multfix(wi, fr[j]) ;
                // divide ith index elements by two (top half of above matrix)
                qr = fr[i]>>1 ;
                qi = fi[i]>>1 ;
                // compute the new values at each index
                fr[j] = qr - tr ;
                fi[j] = qi - ti ;
                fr[i] = qr + tr ;
                fi[i] = qi + ti ;
            }
        }
        --k ;
        L = istep ;
    }
    // end FFT calculation, array fr contains output of DFT

    // serial input
    //Setup for polling the file descriptor
    struct pollfd poll_var[1];
    poll_var[0].fd = fd;
    poll_var[0].events = POLLIN;

    unsigned char buf[2];
    ssize_t rdlen;
    tcflush(fd, TCIOFLUSH);

    //Poll for 20ms.
    //If there is data to read from touch slider, ret=1 and we read the data
    //If there is no data to read, we return 0 from the callback, which restarts it with new input data
    int ret = poll(poll_var, (unsigned long)1, 20);
    if (ret > 0) {
        rdlen = read(fd, buf, 2);
    }
    else {
        //No data to read from touch-slider. We want no speaker output
        //Set out to 0 for all frames and restart callback
        for ( n=0; n < framesPerBuffer; n++) {
            *out = 0.0;
            out++;
        }
        close(fd);  // release the serial descriptor before leaving early
        return 0;   //restart callback
    }
    //end serial input

    // detection of peak frequency
    fix maxMag = -100;
    float maxFreq = 0;
    int maxInd = 0;
    for ( n=15; n < 45; n++) {
        if (fr[n] > maxMag) {
            maxFreq = n * (44100.0 / 4096.0);
            maxMag = fr[n];
            maxInd = n;
        }
    }

    // peak arbitration
    det[4] = det[3];
    det[3] = det[2];
    det[2] = det[1];
    det[1] = det[0];
    det[0] = maxInd;

    //If frequency bins are within one of each other, consolidate to limit flipping between bins
    if ((det[0] == det[1]) || (det[0] == det[1] - 1) || (det[0] == det[1] + 1)){ det[0] = det[1]; }
    if ((det[0] == det[2]) || (det[0] == det[2] - 1) || (det[0] == det[2] + 1)){ det[0] = det[2]; }
    if ((det[0] == det[3]) || (det[0] == det[3] - 1) || (det[0] == det[3] + 1)){ det[0] = det[3]; }
    if ((det[0] == det[4]) || (det[0] == det[4] - 1) || (det[0] == det[4] + 1)){ det[0] = det[4]; }

    int p = 0; // mode
    //Calculate the mode of the previous five maximum frequency points +-1
    if      ((det[0]==det[1]) && (det[0]==det[2])) { p = det[0]; }
    else if ((det[0]==det[1]) && (det[0]==det[3])) { p = det[0]; }
    else if ((det[0]==det[1]) && (det[0]==det[4])) { p = det[0]; }
    else if ((det[0]==det[2]) && (det[0]==det[3])) { p = det[0]; }
    else if ((det[0]==det[2]) && (det[0]==det[4])) { p = det[0]; }
    else if ((det[0]==det[3]) && (det[0]==det[4])) { p = det[0]; }
    else if ((det[1]==det[2]) && (det[1]==det[3])) { p = det[1]; }
    else if ((det[1]==det[2]) && (det[1]==det[4])) { p = det[1]; }
    else if ((det[1]==det[3]) && (det[1]==det[4])) { p = det[1]; }
    else if ((det[2]==det[3]) && (det[2]==det[4])) { p = det[2]; }
    else { p = det[0]; }

    // calculation of pitch shift amount using the mode of the previous 5 measurements as the detected max frequency
    // uses a mapping based on the trumpet's range as pitch shifting is not linear
    int shift = 0;  // default to no shift when p is not covered by a table entry
    if (*buf <= 0x11){ // major 2nd
        if ((p==15)||(p==16)||(p==17)||(p==21)){ shift = 2; }
        if ((p==18)||(p==19)||(p==20)||(p==22)||(p==23)||(p==25)||(p==26)||(p==28)||(p==29)) { shift = 3; }
        if ((p==24)||(p==27)||(p==31)||(p==33)||(p==35)||(p==37)||(p==42)) { shift = 4; }
        if ((p==30)||(p==32)||(p==34)||(p==36)||(p==39)||(p==41)||(p==44)||(p==47)||(p==50)||(p==53)) { shift = 5; }
        if ((p==38)||(p==40)||(p==43)||(p==46)||(p==49)||(p==52)||(p==56)||(p==59)||(p==63)) { shift = 6; }
        if ((p==45)||(p==48)||(p==51)||(p==55)||(p==58)||(p==62)||(p==66)||(p==70)) { shift = 7; }
        if ((p==54)||(p==57)||(p==61)||(p==65)||(p==69)||(p==74)||(p==79)||(p==84)) { shift = 8; }
        if ((p==60)||(p==64)||(p==68)||(p==73)||(p==78)||(p==83)||(p==89)) { shift = 9; }
        if ((p==67)||(p==72)||(p==77)||(p==82)||(p==88)||(p==94)||(p==100)) { shift = 10; }
        if ((p==71)||(p==76)||(p==81)||(p==87)||(p==93)||(p==99)) { shift = 11; }
        if ((p==75)||(p==80)||(p==86)||(p==92)||(p==98)) { shift = 12; }
        if ((p==85)||(p==91)||(p==97)) { shift = 13; }
        if ((p==90)||(p==96)) { shift = 14; }
        if (p==95) { shift = 15; }
    }
    else if (*buf <= 0x22){ // minor 3rd
        if ((p==15)||(p==16)||(p==17)||(p==21)){ shift = 3; }
        if ((p==18)||(p==19)||(p==20)||(p==22)||(p==23)||(p==25)||(p==26)||(p==28)) { shift = 4; }
        if ((p==24)||(p==27)||(p==29)||(p==31)||(p==33)) { shift = 5; }
        if ((p==30)||(p==32)||(p==35)||(p==37)) { shift = 6; }
        if ((p==34)||(p==36)||(p==39)||(p==42)) { shift = 7; }
        if ((p==38)||(p==41)||(p==44)||(p==47)||(p==50)||(p==53)) { shift = 8; }
        if ((p==40)||(p==43)||(p==46)||(p==49)||(p==52)||(p==56)||(p==59)||(p==63)) { shift = 9; }
        if ((p==45)||(p==48)||(p==51)||(p==55)||(p==58)||(p==62)) { shift = 10; }
        if ((p==54)||(p==57)||(p==61)||(p==66)) { shift = 11; }
        if ((p==60)||(p==65)||(p==70)) { shift = 12; }
        if ((p==64)||(p==69)||(p==74)||(p==79)||(p==84)) { shift = 13; }
        if ((p==68)||(p==73)||(p==78)||(p==83)||(p==89)) { shift = 14; }
        if ((p==67)||(p==72)||(p==77)||(p==82)||(p==88)) { shift = 15; }
        if ((p==71)||(p==76)||(p==81)||(p==87)||(p==94)||(p==100)) { shift = 16; }
        if ((p==75)||(p==80)||(p==86)||(p==93)||(p==99)) { shift = 17; }
        if ((p==85)||(p==92)||(p==98)) { shift = 18; }
        if ((p==91)||(p==97)) { shift = 19; }
        if ((p==90)||(p==96)) { shift = 20; }
        if (p==95) { shift = 21; }
    }
    else if (*buf <= 0x33){ // major 3rd
        if ((p==15)||(p==16)) { shift = 4; }
        if ((p==17)||(p==18)||(p==19)||(p==21)||(p==22)||(p==25)) { shift = 5; }
        if ((p==20)||(p==23)||(p==24)||(p==26)||(p==28)) { shift = 6; }
        if ((p==27)||(p==29)||(p==31)) { shift = 7; }
        if ((p==30)||(p==33)||(p==35)) { shift = 8; }
        if ((p==32)||(p==34)||(p==37)) { shift = 9; }
        if ((p==36)||(p==39)||(p==42)) { shift = 10; }
        if ((p==38)||(p==41)||(p==44)||(p==47)||(p==50)) { shift = 11; }
        if ((p==40)||(p==43)||(p==46)||(p==49)||(p==53)||(p==56)) { shift = 12; }
        if ((p==45)||(p==48)||(p==52)||(p==55)||(p==59)) { shift = 13; }
        if ((p==51)||(p==54)||(p==58)||(p==63)) { shift = 14; }
        if ((p==57)||(p==62)) { shift = 15; }
        if ((p==61)||(p==66)) { shift = 16; }
        if ((p==60)||(p==65)||(p==70)) { shift = 17; }
        if ((p==64)||(p==69)||(p==74)||(p==79)) { shift = 18; }
        if ((p==68)||(p==73)||(p==78)||(p==84)) { shift = 19; }
        if ((p==67)||(p==72)||(p==77)||(p==83)) { shift = 20; }
        if ((p==71)||(p==76)||(p==82)||(p==89)) { shift = 21; }
        if ((p==75)||(p==81)||(p==88)||(p==94)||(p==100)) { shift = 22; }
        if ((p==80)||(p==87)||(p==93)||(p==99)) { shift = 23; }
        if ((p==86)||(p==92)||(p==98)) { shift = 24; }
        if ((p==85)||(p==91)||(p==97)) { shift = 25; }
        if ((p==90)||(p==96)) { shift = 26; }
        if (p==95) { shift = 27; }
    }
    else if (*buf <= 0x43){ // perfect 4th
        if ((p==15)||(p==16)||(p==17)) { shift = 6; }
        if ((p==18)||(p==19)||(p==21)||(p==22)) { shift = 7; }
        if ((p==20)||(p==23)||(p==25)) { shift = 8; }
        if ((p==24)||(p==26)||(p==28)) { shift = 9; }
        if ((p==27)||(p==29)||(p==31)) { shift = 10; }
        if ((p==30)||(p==33)||(p==35)) { shift = 11; }
        if ((p==32)||(p==34)||(p==37)) { shift = 12; }
        if ((p==36)||(p==39)||(p==42)) { shift = 13; }
        if ((p==38)||(p==41)||(p==44)) { shift = 14; }
        if ((p==40)||(p==43)||(p==47)||(p==50)) { shift = 15; }
        if ((p==46)||(p==49)||(p==53)) { shift = 16; }
        if ((p==45)||(p==48)||(p==52)||(p==56)) { shift = 17; }
        if ((p==51)||(p==55)||(p==59)) { shift = 18; }
        if ((p==54)||(p==58)||(p==63)) { shift = 19; }
        if ((p==57)||(p==62)) { shift = 20; }
        if ((p==61)||(p==66)) { shift = 21; }
        if ((p==60)||(p==65)||(p==70)) { shift = 22; }
        if ((p==64)||(p==69)) { shift = 23; }
        if ((p==68)||(p==74)||(p==79)) { shift = 24; }
        if ((p==67)||(p==73)||(p==78)) { shift = 25; }
        if ((p==72)||(p==77)||(p==84)) { shift = 26; }
        if ((p==71)||(p==76)||(p==83)||(p==89)) { shift = 27; }
        if ((p==75)||(p==82)||(p==88)||(p==94)) { shift = 28; }
        if ((p==81)||(p==87)||(p==93)) { shift = 29; }
        if ((p==80)||(p==86)||(p==92)||(p==100)) { shift = 30; }
        if ((p==85)||(p==91)||(p==99)) { shift = 31; }
        if ((p==90)||(p==98)) { shift = 32; }
        if (p==97) { shift = 33; }
        if (p==96) { shift = 34; }
        if (p==95) { shift = 35; }
    }
    else if (*buf <= 0x53){ // perfect 5th
        if (p==15) { shift = 8; }
        if ((p==16)||(p==17)) { shift = 9; }
        if ((p==18)||(p==19)||(p==21)) { shift = 10; }
        if ((p==20)||(p==22)) { shift = 11; }
        if ((p==23)||(p==25)) { shift = 12; }
        if ((p==24)||(p==26)||(p==28)) { shift = 13; }
        if (p==27) { shift = 14; }
        if ((p==29)||(p==31)) { shift = 15; }
        if ((p==30)||(p==33)) { shift = 16; }
        if ((p==32)||(p==35)) { shift = 17; }
        if ((p==34)||(p==37)) { shift = 18; }
        if ((p==36)||(p==39)) { shift = 19; }
        if ((p==38)||(p==42)) { shift = 20; }
        if ((p==41)||(p==44)) { shift = 21; }
        if ((p==40)||(p==43)||(p==47)) { shift = 22; }
        if ((p==46)||(p==50)) { shift = 23; }
        if ((p==45)||(p==49)||(p==53)) { shift = 24; }
        if ((p==48)||(p==52)) { shift = 25; }
        if ((p==51)||(p==56)) { shift = 26; }
        if (p==55) { shift = 27; }
        if ((p==54)||(p==59)) { shift = 28; }
        if ((p==58)||(p==63)) { shift = 29; }
        if ((p==57)||(p==62)) { shift = 30; }
        if (p==61) { shift = 31; }
        if ((p==60)||(p==66)) { shift = 32; }
        if ((p==65)||(p==70)) { shift = 33; }
        if ((p==64)||(p==69)) { shift = 34; }
        if (p==68) { shift = 35; }
        if ((p==67)||(p==74)) { shift = 36; }
        if ((p==73)||(p==79)) { shift = 37; }
        if ((p==72)||(p==78)||(p==84)) { shift = 38; }
        if ((p==71)||(p==77)||(p==83)) { shift = 39; }
        if ((p==76)||(p==82)) { shift = 40; }
        if ((p==75)||(p==81)||(p==89)) { shift = 41; }
        if ((p==80)||(p==88)) { shift = 42; }
        if ((p==87)||(p==94)) { shift = 43; }
        if ((p==86)||(p==93)) { shift = 44; }
        if ((p==85)||(p==92)) { shift = 45; }
        if ((p==91)||(p==100)) { shift = 46; }
        if ((p==90)||(p==99)) { shift = 47; }
        if (p==98) { shift = 48; }
        if (p==97) { shift = 49; }
        if (p==96) { shift = 50; }
        if (p==95) { shift = 51; }
    }
    else{ // octave
        shift = p;
    }

    // pitch-shift
    fix fr_s[framesPerBuffer];
    fix fi_s[framesPerBuffer];
    for ( n=0; n < framesPerBuffer; n++) {
        if ((n < 15) || (n < shift)) {
            fr_s[n] = 0;
            fi_s[n] = 0;
        }
        else {
            fr_s[n] = fr[n - shift];
            fi_s[n] = fi[n - shift];
        }
    }

    // inverse FFT - back to time domain with shifted FFT
    for (m=1; m < (framesPerBuffer - 1); m++) {
        // swap odd and even bits
        mr = ((m >> 1) & 0x5555) | ((m & 0x5555) << 1);
        // swap consecutive pairs
        mr = ((mr >> 2) & 0x3333) | ((mr & 0x3333) << 2);
        // swap nibbles ...
        mr = ((mr >> 4) & 0x0F0F) | ((mr & 0x0F0F) << 4);
        // swap bytes
        mr = ((mr >> 8) & 0x00FF) | ((mr & 0x00FF) << 8);
        // shift down mr
        mr >>= SHIFT_AMOUNT ;
        // don't swap that which has already been swapped
        if (mr<=m) continue ;
        // swap the bit-reversed indices
        tr = fr_s[m] ;
        fr_s[m] = fr_s[mr] ;
        fr_s[mr] = tr ;
        ti = fi_s[m] ;
        fi_s[m] = fi_s[mr] ;
        fi_s[mr] = ti ;
    }

    //Danielson-Lanczos
    // Length of the FFT's being combined (starts at 1)
    L = 1 ;
    // Log2 of number of samples, minus 1
    k = LOG2_FRAMES - 1 ;
    // While the length of the FFT's being combined is less than the number of gathered samples
    while (L < framesPerBuffer) {
        // Determine the length of the FFT which will result from combining two FFT's
        istep = L<<1 ;
        // For each element in the FFT's that are being combined . . .
        for (m=0; m<L; ++m) {
            // Lookup the trig values for that element
            j = m << k ;                            // index of the sine table
            wr = Sinewave[j + framesPerBuffer/4] ;  // cos(2pi m/N)
            wi = Sinewave[j] ;                      // sin(2pi m/N), sign flipped relative to the forward FFT
            wr >>= 1 ;                              // divide by two
            wi >>= 1 ;                              // divide by two
            // i gets the index of one of the FFT elements being combined
            for (i=m; i<framesPerBuffer; i+=istep) {
                // j gets the index of the FFT element being combined with i
                j = i + L ;
                // compute the trig terms (bottom half of the above matrix)
                tr = multfix(wr, fr_s[j]) - multfix(wi, fi_s[j]) ;
                ti = multfix(wr, fi_s[j]) + multfix(wi, fr_s[j]) ;
                // take the ith index elements without halving them (top half of above matrix)
                qr = fr_s[i];
                qi = fi_s[i];
                // compute the new values at each index
                fr_s[j] = qr - tr ;
                fi_s[j] = qi - ti ;
                fr_s[i] = qr + tr ;
                fi_s[i] = qi + ti ;
            }
        }
        --k ;
        L = istep ;
    }
    // end inverse FFT calculation, fr_s now holds result

    in = ((INPUT_SAMPLE**)inputBuffer)[inChannel];
    for ( n=0; n < framesPerBuffer; n++) {
        *out = fix2float(20*fr_s[n]);
        out++;
        in++;
    }

    close(fd);
    return 0;
} // end of callback function

/*******************************************************************/
int main(void);
int main(void)
{
    PaError err = paNoError;
    WireConfig_t CONFIG;
    WireConfig_t *config = &CONFIG;
    int configIndex = 0;

    err = Pa_Initialize();
    if( err != paNoError ) goto error;

    if( INPUT_FORMAT == OUTPUT_FORMAT )
    {
        gInOutScaler = 1.0;
    }
    else if( (INPUT_FORMAT == paInt16) && (OUTPUT_FORMAT == paFloat32) )
    {
        gInOutScaler = 1.0/32768.0;
    }
    else if( (INPUT_FORMAT == paFloat32) && (OUTPUT_FORMAT == paInt16) )
    {
        gInOutScaler = 32768.0;
    }

    config->isInputInterleaved = 0;
    config->isOutputInterleaved = 0;
    config->numInputChannels = 1;
    config->numOutputChannels = 1;
    config->framesPerCallback = 4096;

    err = TestConfiguration( config );

    /* Give user a chance to bail out. */
    if( err == 1 )
    {
        err = paNoError;
        goto done;
    }
    else if( err != paNoError ) goto error;

done:
    Pa_Terminate();
    printf("Full duplex sound test complete.\n");
    fflush(stdout);
    printf("Hit ENTER to quit.\n");
    fflush(stdout);
    getchar();
    return 0;

error:
    Pa_Terminate();
    fprintf( stderr, "An error occurred while using the portaudio stream\n" );
    fprintf( stderr, "Error number: %d\n", err );
    fprintf( stderr, "Error message: %s\n", Pa_GetErrorText( err ) );
    printf("Hit ENTER to quit.\n");
    fflush(stdout);
    getchar();
    return -1;
}

static PaError TestConfiguration( WireConfig_t *config )
{
    int c;
    PaError err = paNoError;
    PaStream *stream;
    PaStreamParameters inputParameters, outputParameters;

    inputParameters.device = INPUT_DEVICE; /* default input device */
    if (inputParameters.device == paNoDevice) {
        fprintf(stderr,"Error: No default input device.\n");
        goto error;
    }
    inputParameters.channelCount = config->numInputChannels;
    inputParameters.sampleFormat = INPUT_FORMAT | (config->isInputInterleaved ? 0 : paNonInterleaved);
    inputParameters.suggestedLatency = Pa_GetDeviceInfo( inputParameters.device )->defaultLowInputLatency;
    inputParameters.hostApiSpecificStreamInfo = NULL;

    outputParameters.device = OUTPUT_DEVICE; /* default output device */
    if (outputParameters.device == paNoDevice) {
        fprintf(stderr,"Error: No default output device.\n");
        goto error;
    }

    //Stream configuration
    outputParameters.channelCount = config->numOutputChannels;
    outputParameters.sampleFormat = OUTPUT_FORMAT | (config->isOutputInterleaved ? 0 : paNonInterleaved);
    outputParameters.suggestedLatency = Pa_GetDeviceInfo( outputParameters.device )->defaultLowOutputLatency;
    outputParameters.hostApiSpecificStreamInfo = NULL;

    config->numInputUnderflows = 0;
    config->numInputOverflows = 0;
    config->numOutputUnderflows = 0;
    config->numOutputOverflows = 0;
    config->numPrimingOutputs = 0;
    config->numCallbacks = 0;

    //Opens stream that continuously calls wireCallback()
    err = Pa_OpenStream(
              &stream,
              &inputParameters,
              &outputParameters,
              SAMPLE_RATE,
              config->framesPerCallback, /* frames per buffer */
              paClipOff, /* we won't output out of range samples so don't bother clipping them */
              wireCallback,
              config );
    if( err != paNoError ) goto error;

    err = Pa_StartStream( stream );
    if( err != paNoError ) goto error;

    // serial communication setup
    char *portname = TERMINAL;
    int fd;
    int wlen;
    char *xstr = "Hello!\n";
    int xlen = strlen(xstr);

    fd = open(portname, O_RDWR | O_NOCTTY | O_SYNC);
    if (fd < 0) {
        printf("Error opening %s: %s\n", portname, strerror(errno));
        return -1;
    }
    set_interface_attribs(fd, B9600); //Baud rate 9600 to match the Arduino

    /* simple output */
    wlen = write(fd, xstr, xlen);
    if (wlen != xlen) {
        printf("Error from write: %d, %d\n", wlen, errno);
    }
    tcdrain(fd);    /* delay for output */
    // end serial communication setup

    fflush(stdout);
    c = getchar();

    err = Pa_CloseStream( stream );
    if( err != paNoError ) goto error;

#define CHECK_FLAG_COUNT(member) \
    if( config->member > 0 ) printf("FLAGS SET: " #member " = %d\n", config->member );

    CHECK_FLAG_COUNT( numInputUnderflows );
    CHECK_FLAG_COUNT( numInputOverflows );
    CHECK_FLAG_COUNT( numOutputUnderflows );
    CHECK_FLAG_COUNT( numOutputOverflows );
    CHECK_FLAG_COUNT( numPrimingOutputs );
    printf("number of callbacks = %d\n", config->numCallbacks );

    if( c == 'q' ) return 1;

error:
    return err;
}
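The lookup tables in the callback exist because a musical interval is a frequency ratio, so the number of FFT bins to shift grows with the detected bin. As a rough cross-check, the sketch below computes an idealized equal-temperament bin shift, round(p * (2^(s/12) - 1)), for a detected bin p and an interval of s semitones. It assumes the 44100 Hz sample rate and 4096-point FFT that appear in the callback. The names `bin_to_hz`, `ideal_bin_shift`, and `SEMITONE_RATIO` are hypothetical, and this formula is not how the hand-tuned tables were produced, so its values will not match them everywhere.

```c
#include <assert.h>

#define SAMPLE_RATE_HZ 44100.0              /* assumed, from 44100.0 / 4096.0 in the callback */
#define FFT_SIZE       4096                 /* frames per callback */
#define SEMITONE_RATIO 1.0594630943592953   /* 2^(1/12), equal temperament */

/* Center frequency of an FFT bin in Hz. */
double bin_to_hz(int bin)
{
    return bin * (SAMPLE_RATE_HZ / FFT_SIZE);
}

/* Idealized number of bins to move a peak at `bin` up by `semitones`.
 * Hypothetical helper: the project uses hand-tuned tables instead. */
int ideal_bin_shift(int bin, int semitones)
{
    assert(bin >= 0 && semitones >= 0);

    /* Build the frequency ratio 2^(semitones/12) by repeated multiplication. */
    double ratio = 1.0;
    for (int i = 0; i < semitones; i++) {
        ratio *= SEMITONE_RATIO;
    }

    /* The shifted peak sits at bin * ratio, so the offset is bin * (ratio - 1).
     * Adding 0.5 before truncation rounds the non-negative value to nearest. */
    return (int)(bin * (ratio - 1.0) + 0.5);
}
```

For example, `ideal_bin_shift(15, 2)` gives 2, which agrees with the major 2nd table entry for `p == 15`, and an octave (12 semitones) reduces to a shift equal to the bin itself, as in the `shift = p` branch.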

Cap_Touch_Basic.ino

Cap_Touch_Basic.ino is part of the Arduino library for the capacitive touch slider that we purchased from TinyCircuits. The program reads I2C input from the capacitive touch slider, which is in the form of a location between 1 and 100. We edited the code to send this location via USB to the Raspberry Pi so that we can use the touch location for pitch shifting.
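On the Raspberry Pi side, the callback compares the received position byte against fixed thresholds to choose an interval. The sketch below restates that selection as a standalone function. The thresholds (0x11, 0x22, 0x33, 0x43, 0x53) are taken from the pitch-shifter code; the `Interval` struct, the function name `interval_from_slider`, and the semitone count attached to each interval name are added here for illustration only.

```c
/* Interval chosen by the touch slider (hypothetical type for this sketch). */
typedef struct {
    const char *name;   /* interval name as used in the code comments */
    int semitones;      /* standard size of that interval in semitones */
} Interval;

/* Map a slider position byte to an interval, using the same
 * thresholds as the if / else-if chain in wireCallback(). */
Interval interval_from_slider(unsigned char pos)
{
    if (pos <= 0x11) return (Interval){ "major 2nd",   2 };
    if (pos <= 0x22) return (Interval){ "minor 3rd",   3 };
    if (pos <= 0x33) return (Interval){ "major 3rd",   4 };
    if (pos <= 0x43) return (Interval){ "perfect 4th", 5 };
    if (pos <= 0x53) return (Interval){ "perfect 5th", 7 };
    return (Interval){ "octave", 12 };  /* everything above 0x53 */
}
```

With these thresholds, each of the first five intervals occupies roughly a sixth of the slider (about 17 positions), and positions above 0x53 (83) select the octave.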

//-------------------------------------------------------------------------------
// Implementation of TinyCircuits Capacitive Touch Slider Example
// Example by Ben Rose, TinyCircuits http://tinycircuits.com
// Edited by Henry Geller & Bobby Haig
//-------------------------------------------------------------------------------

#include <Wire.h>
#include <Wireling.h>
#include <ATtiny841Lib.h>
#include <CapTouchWireling.h>

CapTouchWireling capTouch;

// Serial port alias per board family (assumed here; this is the usual TinyCircuits example pattern)
#if defined (ARDUINO_ARCH_AVR)
#define SerialMonitorInterface Serial
#elif defined(ARDUINO_ARCH_SAMD)
#define SerialMonitorInterface SerialUSB
#endif

int capTouchPort = 0;

void setup() {
  SerialMonitorInterface.begin(9600);
  Wire.begin();
  Wireling.begin();
  Wireling.selectPort(capTouchPort);

  // Wait up to 5 seconds for the serial port to come up
  while (!SerialMonitorInterface && millis() < 5000);

  if (capTouch.begin()) {
    SerialMonitorInterface.println("Capacitive touch Wireling not detected!");
  }
}

void loop() {
  bool completedTouch = capTouch.update();
  if (capTouch.isTouched()) {
    int pos = capTouch.getPosition();
    //getPosition() returns a position value from 0 to 100 across the sensor from I2C
    //Writes to the USB where the Raspberry Pi can read it in the callback function
    Serial.write(pos);
  }
}